AI in biology

DNA, RNA, and proteins play a key role in controlling the molecular processes in our cells. Cutting-edge AI models are capable of predicting the functions of biological sequences like these and designing new ones, including AI-generated viruses. At a sandpit event organized by Wübben Stiftung Wissenschaft, experts discussed strategies for preventing misuse of the technology.

Scientists at Stanford University demonstrated in September 2025 what generative AI models are already capable of. They produced genomes of functioning bacteriophages, some of which had very low similarity to their natural counterparts. Bacteriophages are viruses that attack bacteria. In the lab, the synthetic viruses successfully destroyed Escherichia coli bacteria, showing that generative AI models hold great potential for medical applications. For instance, AI-generated bacteriophages could offer a way of fighting multidrug-resistant bacteria. And protein structures generated by similar AI models could serve as a basis for therapeutic antibodies, for instance to treat cancer.

But how do we prevent these AI models from being used to design biological sequences that could be harmful? If we give our imagination free rein, we can think of countless scenarios in which this technology could be misused: A malicious actor could design a novel virus for use as a biological weapon, for example, or a pathogen that only attacks rice varieties grown in a hostile neighboring country.

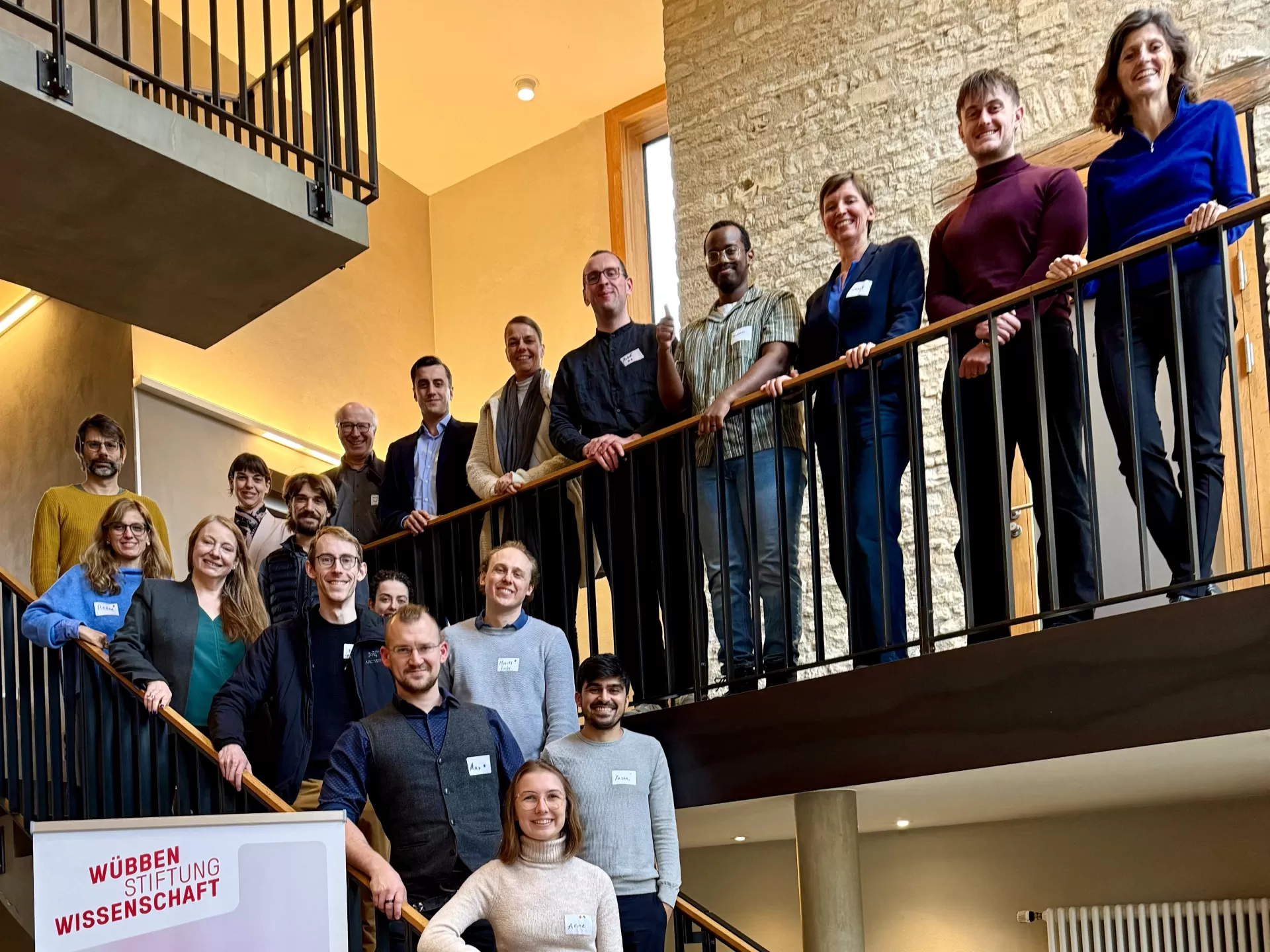

“We don't want to conjure up any nightmarish scenarios; we want to assess the potential impacts of generative AI models in the life sciences with a critical eye,” says Maximilian Sprang, a bioinformatician at Johannes Gutenberg University Mainz. In November 2025 he brought leading experts in the fields of computer science, biology, ethics, politics, and adjacent subjects together with representatives of the pharmaceutical industry to highlight the risks that could arise from generative AI, as well as the opportunities. The focus was on dual-use scenarios, in which AI technology can be used both for life-saving medicine and deadly biological weapons. “The problem is that the models are improving at a fast pace and can be used for both good and problematic applications,” says Sprang.

The sandpit principle: Multiple disciplines and productive misunderstandings

For three days, the 24 researchers gathered in Ingelheim had the opportunity to share information on the current state of AI use in the life sciences, discuss different perspectives, and develop proposals for dealing with potential risks. One of the aims was to formulate risk-prevention strategies, draw up guidelines, and forge stronger links between technical experts and policymakers to make generative AI use safer. “Biology supplies us with the most highly developed ‘hardware’ in the universe, which we can use for future healthcare and the welfare of our planet,” says Marc Güell, one of the participants, who is a synthetic biologist at Pompeu Fabra University in Barcelona. “But we must take care to use the technology in the best way.”

The discussion took place in a sandpit, an event format that Wübben Stiftung Wissenschaft uses for in-depth exploration of current research questions in interdisciplinary teams. “AI in the life sciences is such a multifaceted topic that it is impossible to grasp with a silo approach,” says Sprang. “Thinking through the consequences requires knowledge from a wide range of different fields.”

The sandpit approach is based on three dialogue formats: short, thought-provoking “seed talks”, round-table discussions, and interactive workshops. The seed talks by experts from computer science, biotechnology, and politics brought all the participants up to speed with the current state of play in the speakers’ respective fields. The round-table discussions, held in groups of varying sizes, facilitated an exchange of views on the challenges faced in this context, while the interactive workshops, which were based on design thinking, produced ideas for safer use of AI. “The small groups and their changing configurations resulted in a clash of disciplines and perspectives, which inevitably led to misunderstandings, but these were productive rather than obstructive,” says Rosae María Martín Peña, one of the participants, who is a postdoc at the Centre for Ethics and Law in the Life Sciences at Leibniz University Hannover.

Safety gaps that must be closed

Regulations and procedures for ensuring the safety of newly created biological sequences already exist. For instance, there are detection protocols that can at least prevent the inadvertent generation of harmful sequences. They compare DNA that is about to be synthesized with known harmful sequences and sound the alarm if the similarity is too great. “But there are safety gaps, for instance if AI is used to exploit the gray zone between known DNA sequences and new part-sequences, and the new sequence falls through the cracks,” says Sprang. “It is not yet possible to make brand new DNA sequences, but simply reconfiguring existing DNA could be enough to evade existing safeguards and produce dangerous sequences.”
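
The comparison step described above can be illustrated with a deliberately simplified sketch. It is not the protocol used by any real screening provider: the k-mer overlap measure, the threshold of 0.8, the function names, and the toy reference sequence are all assumptions made for illustration, and real screening pipelines are considerably more sophisticated.

```python
from __future__ import annotations

# Hypothetical reference list. The sequence is an arbitrary placeholder,
# not a real sequence of concern.
FLAGGED = {
    "toy_sequence_of_concern": "ATGGCTAGCTAGGATCCGATCGATCGTACGTTAGC",
}


def kmers(seq: str, k: int) -> set[str]:
    """Return the set of all overlapping substrings of length k."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def similarity(query: str, reference: str, k: int = 8) -> float:
    """Fraction of the query's k-mers that also occur in the reference."""
    query_kmers = kmers(query, k)
    if not query_kmers:
        # Query shorter than k: nothing to compare.
        return 0.0
    return len(query_kmers & kmers(reference, k)) / len(query_kmers)


def screen(query: str, flagged: dict[str, str],
           k: int = 8, threshold: float = 0.8) -> list[str]:
    """Names of flagged references the query resembles too closely."""
    return [
        name
        for name, reference in flagged.items()
        if similarity(query, reference, k) >= threshold
    ]
```

Calling `screen(order, FLAGGED)` on a sequence order returns the names of any flagged entries that trigger the alarm. The sketch also makes the gap Sprang describes easy to see: a sequence that has been rearranged or altered enough to share few k-mers with every reference scores below the threshold and passes, whatever its function might be.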

One important discussion concerned risk minimization when using AI models to help translate biomedical research into clinical practice. A lack of precision or a distortion in the underlying data can make even well-intentioned use scenarios problematic and, in the worst case, could harm patients. So we have to ask what data the AI models are using to produce their results. “The models are very data-hungry and most of the data they feed on still relates to Caucasian men, which will distort the predictions,” says Sprang. In the same way, demographic and socioeconomic differences are often not given sufficient consideration. Poverty, for instance, is a significant health factor. “If these aspects are ignored, it can lead to faulty conclusions.”

This raises a key question: Who is responsible if something goes wrong, for example if the wrong treatment is prescribed at a hospital on the basis of misdirected AI models? In most cases, responsibility is shared between multiple actors, and the liability situation is unclear. “To close these kinds of safety gaps, we need ethical regulations that go beyond technical safety measures and cover the allocation of responsibilities, data protection at population level, and robust oversight mechanisms, as well as innovation,” says Martín Peña.

The sandpit continues to have an impact as an active expert network

The primary aim of the sandpit was to draw up a white paper on ethical data use and the risks of dual-use scenarios, including recommendations for minimizing these risks. “At the end of the sandpit, we worked in small groups to develop solutions to very concrete problems that will serve as the basis for the white paper,” says Sprang. “We were all surprised at how many good suggestions were produced in such a short time thanks to the design thinking approach.” The white paper, which will be sent to policymaking bodies in summer 2026, is intended to build a bridge between policymakers and scientific and technical actors and to facilitate evidence-based regulation.

The sandpit could also lead to the creation of a large European research project that would develop AI models to design DNA sequences with intrinsic safeguards against the risk of misuse. A number of funding applications have already been submitted or are in the planning stage. Improved mechanisms to identify harmful generated DNA sequences are another area the participants are working on. “AI models promise numerous sensible use scenarios for the future, but we urgently need to examine what they can really do, the risks and the obstacles,” says Sprang. “This is particularly important in medicine, where misuse can quickly become life-threatening.”

Maximilian Sprang has been leading a junior research group at the Medical Center of Johannes Gutenberg University Mainz since March 2025, where he combines bioinformatics and AI to uncover patterns in biological data and support translational research in immunology. At the end of 2025, he received the Einstein Foundation Early Career Award 2025 for his project “Erring Rigorously,” which aims to improve reproducibility and data reliability in functional genomics.

From initial brainstorming to research project: The Wübben Foundation sandpits are where brave new research ideas are born.

In conversation with: Anne Schmieder

What perspective did you contribute to the discussion?

As a researcher in a protein design laboratory, the Schoeder Lab at Leipzig University, and as a member of the AI competence center ScaDS.AI, I work with generative AI tools every day. I participated in the sandpit to learn about different perspectives on how generative AI, or GenAI, is changing the biosciences – and to take part in interdisciplinary dialogue. In my opinion, the greatest potential of GenAI is in the fields of pharmaceuticals, medicine, and chemistry. The speed and capacity available now when running searches for potential drug candidates have increased exponentially. Experimental validation in the lab is still essential, but the early search process is much more efficient. Although I believe the benefits outweigh the risks, the greatest challenge is still interpretability. It is currently difficult to understand fully how these models process input data and how to interpret the results with 100 percent certainty. We can’t yet blindly rely on generative AI models, but the resources they save make them an indispensable asset.

Why was the sandpit a good format for advancing the discussion on generative AI?

The sandpit format was great for sparking an ongoing discussion. I particularly liked how the group work encouraged us to learn about developments outside our specific niches. The professional moderation was key. It not only kept us on track, but also enabled valuable side discussions, which led to strong connections between the participants. Since different groups were assembled for each task, we were constantly being confronted with new perspectives. This dynamic structure meant we were able to keep refining our own perspectives and improving the quality of our joint results.

What do you hope the sandpit will achieve in the long term?

I hope the sandpit will focus awareness on this topic and help spread expertise among scientists. Our home institutions will profit directly from this knowledge transfer because we can better educate colleagues who may not deal with AI or its potential risks every day. In terms of the responsible use of GenAI, I hope future projects will place an even stronger emphasis on biosafety and proactive risk assessment. It is vital that we discuss these impacts openly before any serious risks arise. For me personally, the sandpit has already been a success: I have met incredibly fascinating researchers, which has led to exciting collaborations.

Contact

Dr. Maximilian Sprang, University Medical Center of the Johannes Gutenberg University Mainz, The Mayer Lab, masprang@uni-mainz.de